Last weekend The Independent published a ridiculous piece of anti-vaccine scaremongering by Paul Gallagher on its front page. The article reports the story of girls who became ill after receiving the HPV vaccine, and strongly implies that the vaccine caused the illnesses, flying in the face of massive amounts of scientific evidence to the contrary.

I could go on at length about how dreadful, irresponsible, and scientifically illiterate the article was, but I won’t, because Jen Gunter and jdc325 have already done a pretty good job of that. You should go and read their blogposts. Do it now.

Right, are you back? Let’s carry on then.

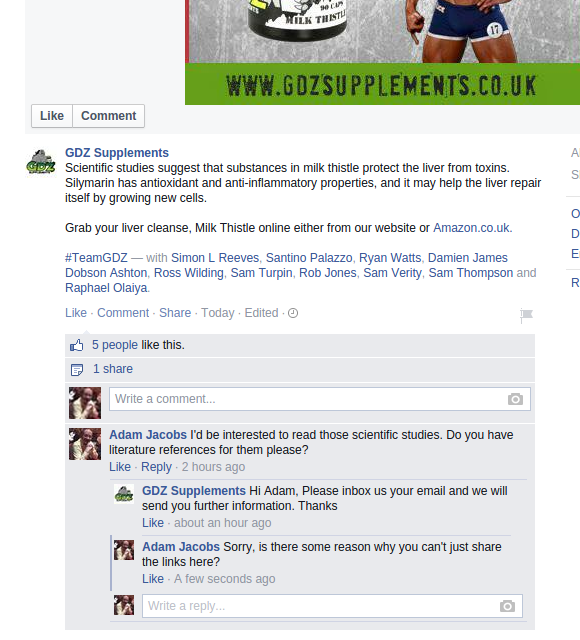

What I want to talk about today is the response I got when I emailed the editor of the Independent on Sunday, Lisa Markwell, to suggest that the paper might want to publish a rebuttal to correct the dangerous misinformation in the original article. Ms Markwell was apparently too busy to reply to a humble reader, so the reply I got came from the deputy managing editor, Will Gore. Here it is below, with my annotations.

Dear Dr Jacobs

Thank you for contacting us about an article which appeared in last weekend’s Independent on Sunday.

Media coverage of vaccine programmes – including reports on concerns about real or perceived side-effects – is clearly something which must be carefully handled; and we are conscious of the potential pitfalls. Equally, it is important that individuals who feel their concerns have been ignored by health care professionals have an outlet to explain their position, provided it is done responsibly.

I’d love to know what they mean by “provided it is done responsibly”. I think a good start would be not to stoke anti-vaccine conspiracy theories with badly researched scaremongering. Obviously The Independent has a different definition of “responsibly”. I have no idea what that definition might be, though I suspect it includes something about ad revenue.

On this occasion, the personal story of Emily Ryalls – allied to the comparatively large number of ADR reports to the MHRA in regard to the HPV vaccine – prompted our attention. We made clear that no causal link has been established between the symptoms experienced by Miss Ryalls (and other teenagers) and the HPV vaccine. We also quoted the MHRA at length (which says the possibility of a link remains ‘under review’), as well as setting out the views of the NHS and Cancer Research UK.

Oh, seriously? You “made clear that no causal link has been established”? Are we even talking about the same article here? The one I’m talking about has the headline “Thousands of teenage girls enduring debilitating illnesses after routine school cancer vaccination”. On what planet does that make it clear that the link was not causal?

I think what they mean by “made clear that no causal link has been established” is that they were very careful with their wording not to explicitly claim a causal link, while nonetheless using all the rhetorical tricks at their disposal to make sure a causal link was strongly implied.

Ultimately, we were not seeking to argue that vaccines – HPV, or others for that matter – are unsafe.

No, you’re just trying to fool your readers into thinking they’re unsafe. So that’s all right then.

Equally, it is clear that for people like Emily Ryalls, the inexplicable onset of PoTS has raised questions which she and her family would like more fully examined.

And how does blaming it on something that is almost certainly not the real cause help?

Moreover, whatever the explanation for the occurrence of PoTS, it is notable that two years elapsed before its diagnosis. Miss Ryalls’ family argue that GPs may have failed to properly assess symptoms because they were irritated by the Ryalls mentioning the possibility of an HPV connection.

I don’t see how that proves a causal link with the HPV vaccine. And anyway, didn’t you just say that you were careful to avoid claiming a causal link?

Moreover, the numbers of ADR reports in respect of HPV do appear notably higher than for other vaccination programmes (even though, as the quote from the MHRA explained, the majority may indeed relate to ‘known risks’ of vaccination; and, as you argue, there may be other particular explanations).

Yes, there are indeed other explanations. What a shame you didn’t mention them in your story. Perhaps if you had done, your claim to be careful not to imply a causal link might look a bit more plausible. But I suppose you don’t like the facts to get in the way of a good story, do you?

The impact on the MMR programme of Andrew Wakefield’s flawed research (and media coverage of it) is always at the forefront of editors’ minds whenever concerns about vaccines are raised, either by individuals or by medical studies. But our piece on Sunday was not in the same bracket.

No, sorry, it is in exactly the same bracket. The media coverage of MMR vaccine was all about hyping up completely evidence-free scare stories about the risks of MMR vaccine. The present story is all about hyping up completely evidence-free scare stories about the risk of HPV vaccine. If you’d like to explain to me what makes those stories different, I’m all ears.

It was a legitimate item based around a personal story and I am confident that our readers are sophisticated enough to understand the wider context and implications.

Kind regards

Will Gore

Deputy Managing Editor

If Mr Gore seriously believes his readers are sophisticated enough to understand the wider context, then he clearly hasn’t read the readers’ comments on the article. It is totally obvious that a great many readers have inferred a causal relationship between the vaccine and subsequent illness from the article.

I put that point to Mr Gore, who replied that he was not sure the readers’ comments were representative.

Well, that’s true. They are probably not. But they don’t need to be.

There are no doubt some readers of the article who are dyed-in-the-wool anti-vaccinationists. They believed all vaccines are evil before reading the article, and they still believe all vaccines are evil. For those people, the article will have had no effect.

Many other readers will have enough scientific training (or just simple common sense) to realise that the article is nonsense. They will not infer a causal relationship between the vaccine and the illnesses. All they will infer is that The Independent is spectacularly incompetent at reporting science stories and that it would be really great if The Independent could afford to employ someone with a science GCSE to look through some of their science articles before publishing them. They will also not be harmed by the article.

But there is a third group of readers. Some people are not anti-vaccine conspiracy theorists, but nor do they have science training. They probably start reading the article with an open mind. After reading the article, they may decide that HPV vaccine is dangerous.

And what if some of those readers are teenage girls who are due for the vaccination? What if they decide not to get vaccinated? What if they subsequently get HPV infection, and later die of cervical cancer?

Sure, there probably aren’t very many people to whom that description applies. But how many is an acceptable number? Perhaps Gallagher, Markwell, and Gore would like to tell me how many deaths from cervical cancer would be a fair price to pay for writing the article?

It is not clear to me whether Gallagher, Markwell, and Gore are simply unaware of the harm that such an article can do, or whether they are aware and simply don’t care. Are they so naive as to think that their article doesn’t promote an anti-vaccinationist agenda, or do they think that clicks on their website and ad revenue are a more important cause than human life?

I really don’t know which of those possibilities I think is more likely, nor would I like to say which is worse.